The Future of Language Generation: Understanding GPT-3

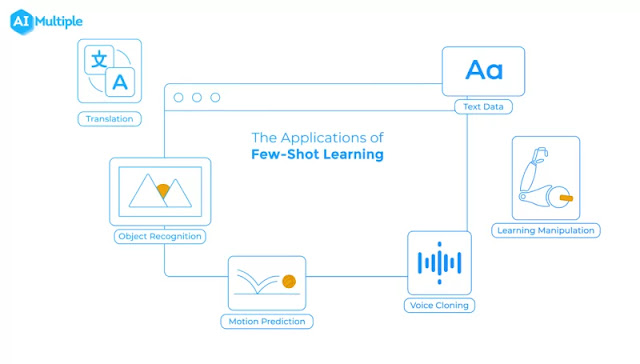

Have you heard of GPT-3? It's a language generation model developed by OpenAI and released in 2020, and it shook up the world of natural language processing. GPT-3, or Generative Pre-trained Transformer 3, is the third iteration of the GPT series of language models. It has 175 billion parameters, which made it one of the largest language models at the time of its release. But what does that mean for us?

One of the key features of GPT-3 is its ability to perform a wide range of natural language processing tasks without additional fine-tuning or training. This is known as "few-shot learning": the model adapts to a new task from just a few examples placed directly in the prompt, with no change to its weights. This means that GPT-3 can write essays, compose poetry, and even code, all without the need ...
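To make few-shot learning concrete, here is a minimal sketch of how such a prompt might be assembled. The helper name `build_few_shot_prompt`, the `Input:`/`Output:` labels, and the translation examples are all assumptions chosen for illustration, not an official OpenAI format, and the snippet only builds the text; it does not call any model.

```python
def build_few_shot_prompt(task, examples, query):
    """Assemble a few-shot prompt: a task description, a few worked
    examples, then the new input the model should complete.

    The layout (Input:/Output: labels) is an illustrative convention,
    not a format required by any particular model.
    """
    lines = [task, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")  # blank line between examples
    # Leave the final output empty so the model fills it in.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical examples: two English-to-French pairs, then a new word.
examples = [("cheese", "fromage"), ("house", "maison")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "book")
print(prompt)
```

The resulting text would be sent to the model as-is. The point is that the "training" happens entirely inside the prompt: swapping in different examples changes the task without retraining anything.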
